George Jojo Boateng

CEO & Cofounder, Kwame AI Inc

May 15, 2026

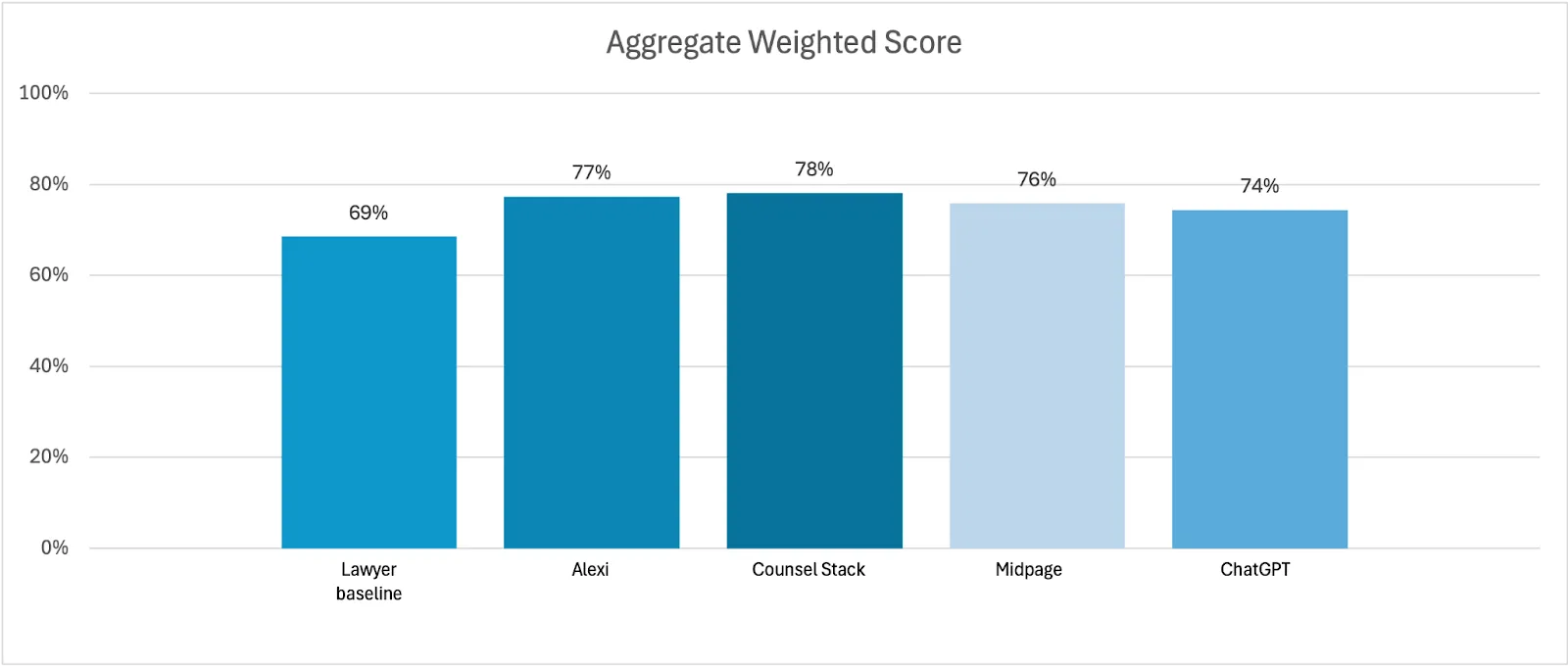

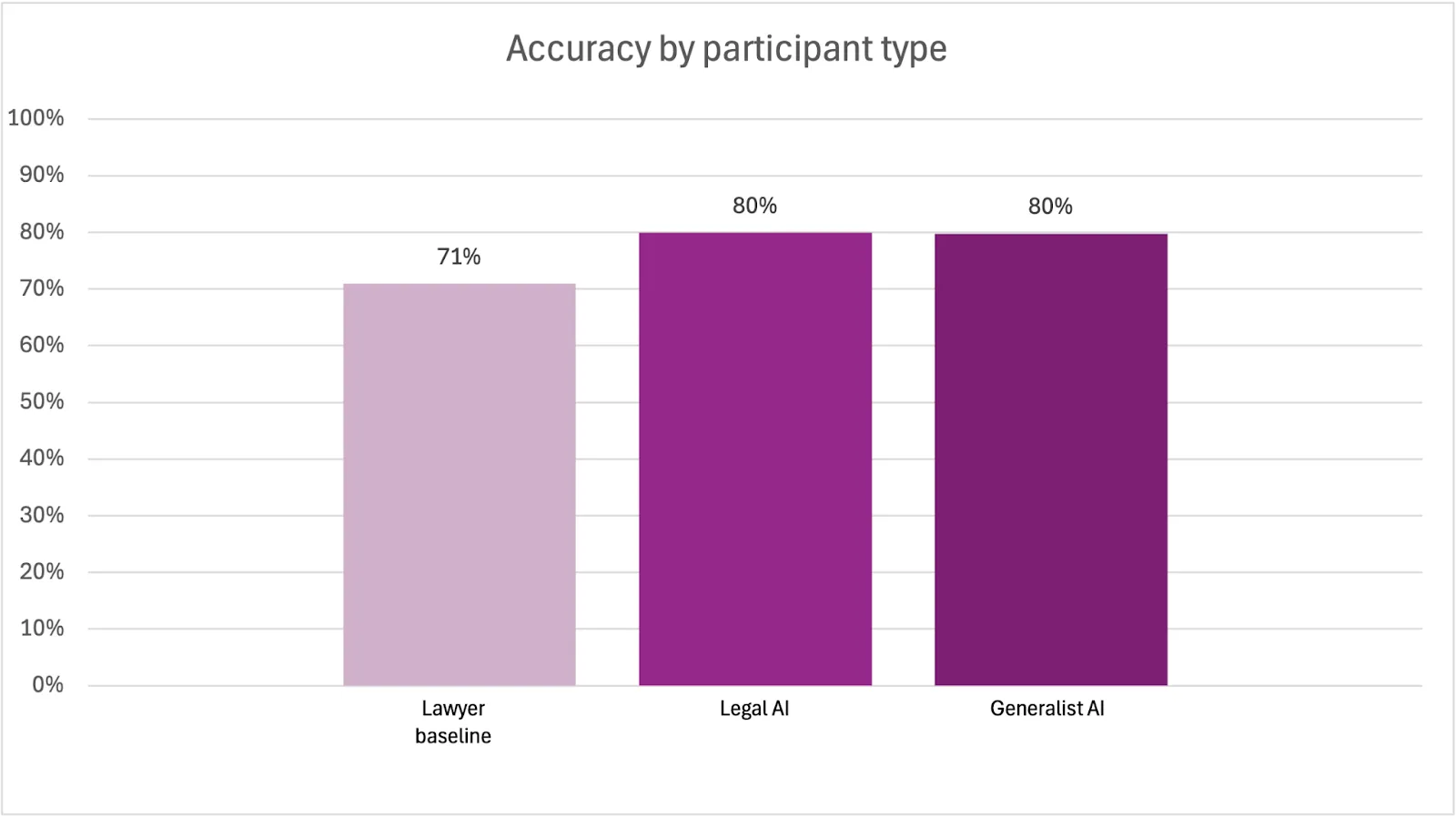

The legal profession is undergoing a seismic shift as artificial intelligence (AI) tools become increasingly sophisticated. A recent benchmarking study by Vals AI, "Vals Legal AI Report (VLAIR) - Legal Research," published in October 2025, has put the spotlight on this transformation by comparing the performance of Legal AI, ChatGPT, and human lawyers on 200 legal research questions based on U.S. law. The results? Legal AI led the pack with an accuracy rate of 77%, followed by ChatGPT at 74% and lawyers at 69%. But as always, the devil is in the details. Here are some insights from the report.

Accuracy: Legal AI vs. ChatGPT

One of the findings that surprised me most was how close the accuracy rates of Legal AI and ChatGPT were. I suspect this is because U.S. legal content is widely available online and likely forms part of ChatGPT’s training data. However, based on internal evaluations with Eskwai, our legal AI for African jurisdictions, I expect the gap between specialized Legal AI and generic AI models like ChatGPT to widen in other jurisdictions, such as those in Africa, where legal materials are less accessible online. In regions with limited digital legal resources, Legal AI’s advantage in accuracy could be much more pronounced.

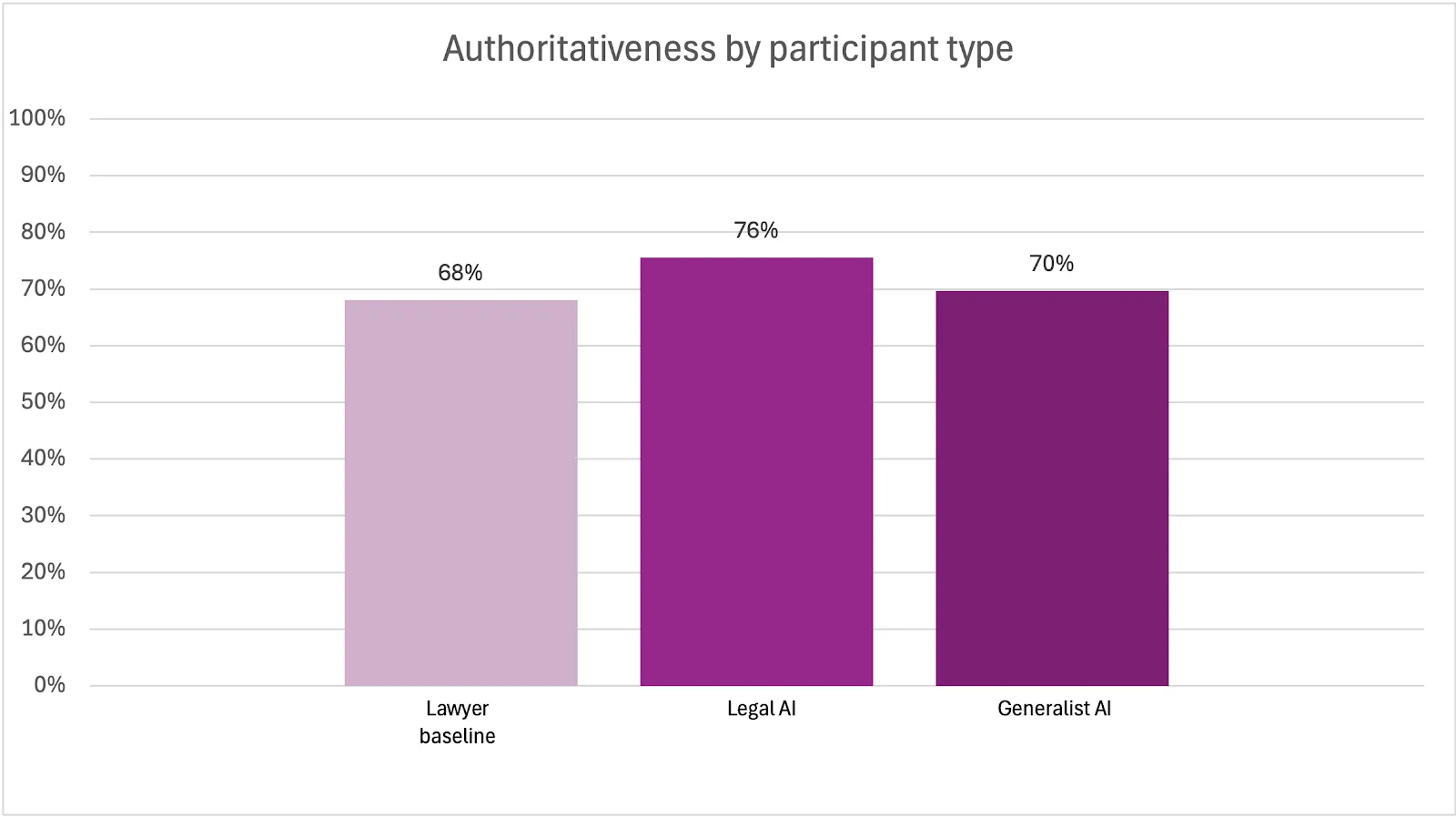

Authoritativeness: The Citation Gap

While Legal AI and ChatGPT performed similarly on accuracy, Legal AI pulled ahead in authoritativeness, defined as the ability to support answers with citations to relevant and valid law. This is a critical metric in legal research, where the reliability of sources is paramount. Generic AI tools, lacking access to curated legal databases, are more prone to hallucinations, that is, fabricating or misattributing legal authorities. Even so, the gap in authoritativeness between Legal AI and ChatGPT was smaller than I expected, possibly because ChatGPT had access to web search during the evaluation or because its training data likely includes U.S. cases and legislation.

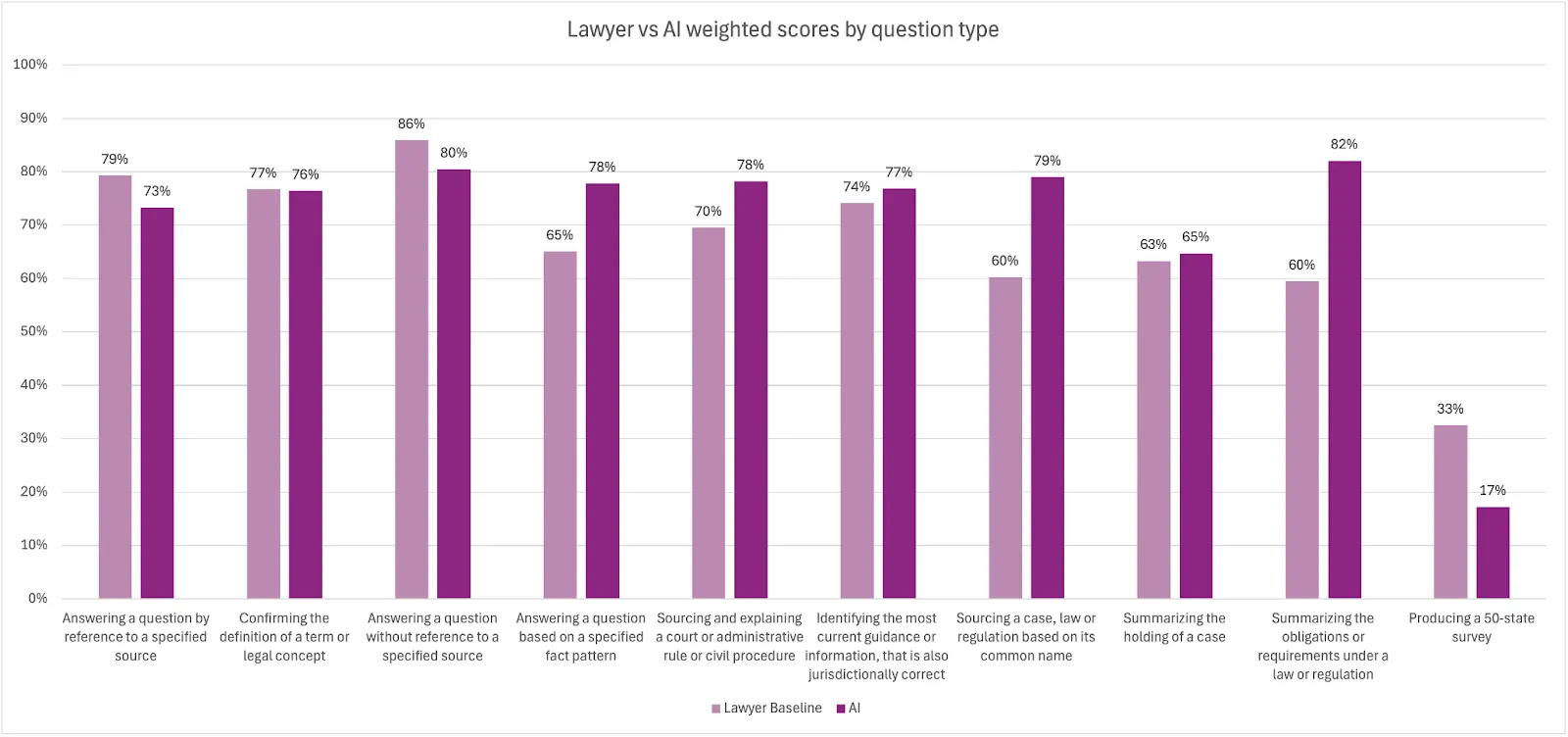

Lawyers’ Strengths: Nuance and Judgment

Despite performing worse than Legal AI overall, lawyers outperformed Legal AI in 4 out of 10 question categories. These categories required reasoning across multiple jurisdictions, a nuanced understanding of legal terms and concepts, and judgment-based synthesis. This highlights the strengths of human lawyers: the ability to interpret complex legal issues, apply context-sensitive reasoning, and exercise professional judgment. However, as AI models continue to evolve and more advanced pipelines are developed, this gap may narrow.

Pros of the Study

- Comprehensive Benchmarking: This is the first public, comprehensive benchmarking of AI on legal research since the 2023 Stanford study on hallucinations in legal AI tools.

- Human Evaluation: Unlike previous studies that relied on LLM-as-a-judge, such as Vals AI’s first legal AI study, this study used human evaluators, a significant improvement for assessing legal tasks and for legal research in particular.

- Transparent Methodology: The methodology was described in sufficient detail, with a well-articulated evaluation rubric that was used to grade the answers. While some gaps remain (see Cons), the transparency is commendable.

- Inclusion of Generic AI: The study included a generic AI model, ChatGPT, which the previous Vals AI study did not do. Future evaluations should include other models such as Claude, Gemini, and open-source alternatives.

Cons and Areas for Improvement

- ChatGPT Version Disclosure: The study did not disclose which version of ChatGPT was used. In 2025, such an omission is glaring: versioning is crucial for benchmarking because newer iterations may perform differently. Disclosing the version also provides transparency and assures readers that the best available version of a generic model was used for comparison.

- Lawyer Seniority: The report did not specify the seniority level of participating lawyers (e.g., associates vs. partners), making it difficult to contextualize the lawyer baseline.

- Vendor Transparency: Vendors were apparently allowed to “drop out” before publication if dissatisfied with their results. This option undermines the transparency and credibility of the study and should be eliminated in future iterations.

Looking Ahead: Jurisdictional Challenges and Opportunities

Overall, this Vals AI study is a step in the right direction. Because legal research is inherently jurisdictional, it is essential to replicate such benchmarking studies in regions beyond the Global North. The availability and accessibility of legal materials vary widely, and AI models trained on U.S. law may not perform as well in other jurisdictions. This gap is the reason we built Eskwai, a trustworthy legal AI that addresses the jurisdictional needs of African lawyers.